AI-powered technology expanding rotorcraft potential

- Overwatch Imaging

- Jul 24, 2023

- 4 min read

Updated: Oct 2, 2023

Artificial intelligence (AI) is proving its value in rotorcraft operations globally.

By Jen Boyer

Vertical Valor: Summer 2023

Artificial intelligence (AI) is proving its value in rotorcraft operations globally. From helping reduce workload and response time in wildland fires to expanding rotorcraft mission possibilities, this technology is quickly defining the next evolution of the industry.

Technology with AI continues to come online at a rapid pace. Overwatch Imaging's AI-powered automated imaging and Near Earth Autonomy's beyond visual line of sight flight automation solutions are two leading-edge examples.

This article is shared from Vertical Valor Magazine's summer 2023 edition. Full edition here: https://issues.verticalmag.com/554/766/1745/Valor-Summer-2023/

Overwatch Imaging:

As recently as seven years ago, wildfire bosses received once-daily maps compiled by the U.S. Forest Service's National Infrared Operations (NIROPS). An aircraft would fly over the fire at night and gather data on hot spots that was then put together as a readable map and delivered the next morning to help decision-makers identify the day's plan of attack.

Today, thanks to technology like Overwatch Imaging's smart sensors, that same information is delivered in as little as 15 minutes and can be updated throughout the day or night, and as often as needed.

Overwatch Imaging was founded in 2016 by two aerospace engineers who saw value in improving and automating imaging capabilities from aircraft for faster delivery. They developed multiple sensor packages for a variety of aircraft that can capture critical data from the air in real time and, through the use of AI, automate the mapping process to deliver mission-critical images quickly.

In addition to wildland fire, Overwatch's products are used for maritime patrol, surveillance, search-and-rescue, disaster response, environmental mapping, and border patrol.

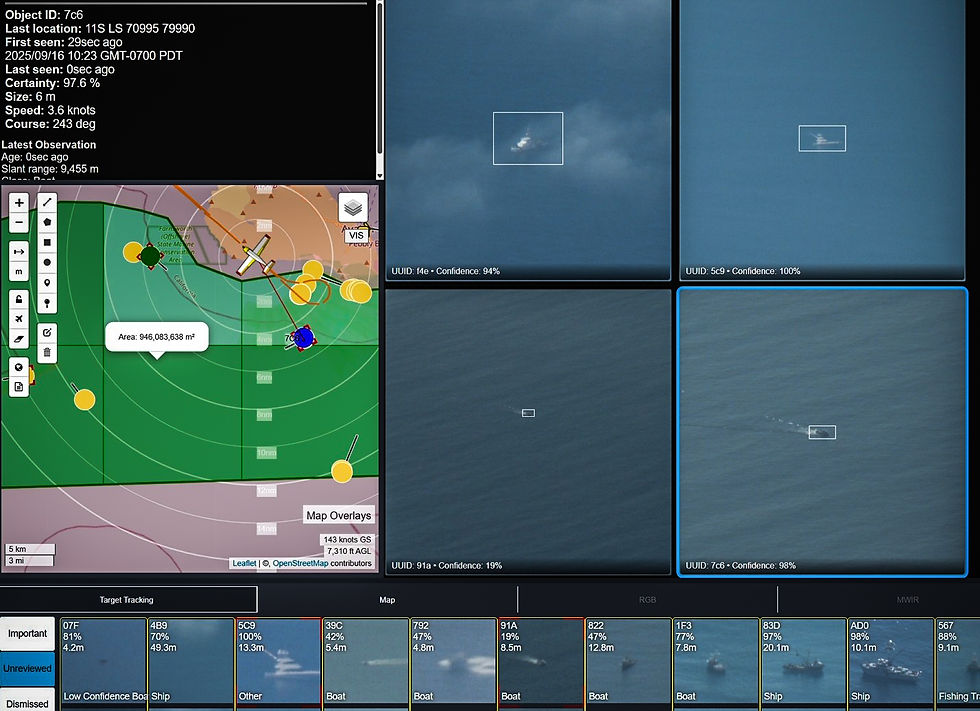

The company designed two series of smart sensor hardware and developed AI software to interpret and disseminate the data collected by the sensors. The PT series of sensors includes electro-optical/infrared (EO/IR) sensors that automatically scan, find, and detect objects of interest over wide areas. The TK series operates nadir-oriented multispectral sensor systems optimized for high-quality imagery for wide-area, multidomain mapping and intelligence.

Both sensor systems utilize Overwatch Imaging's AI software, which automates sensor operation, processes the data, then delivers actionable intelligence based on the operator's need. An operator sets a GPS search area and the system automatically does the rest of the work. The sensors capture imagery in a precise scan pattern over the area. Overwatch AI processes, analyzes, and relays image data and stitches those images together to create detailed, high-resolution georeferenced mosaics and maps with the multispectral imagery overlaid in real time.
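To make the "set a search area, then scan it in a pattern" idea concrete, here is a minimal Python sketch of a back-and-forth ("lawnmower") sweep over a rectangular GPS box. It is an illustration only, not Overwatch Imaging's algorithm: the function name `lawnmower_waypoints`, the `swath_m` parameter (the ground width covered by one pass), and the flat-earth conversion constant are all assumptions introduced for this example.

```python
import math

# Rough metres per degree of latitude (assumption: flat-earth
# approximation, adequate only for a small search box).
METERS_PER_DEG_LAT = 111_320.0


def lawnmower_waypoints(south, west, north, east, swath_m):
    """Return (lat, lon) waypoints that sweep the box in alternating
    east-west passes spaced no more than swath_m metres apart.

    Hypothetical helper for illustration only.
    """
    if not (south < north and west < east):
        raise ValueError("expected south < north and west < east")
    if swath_m <= 0:
        raise ValueError("swath_m must be positive")

    height_m = (north - south) * METERS_PER_DEG_LAT
    passes = max(1, math.ceil(height_m / swath_m))
    step_deg = (north - south) / passes

    waypoints = []
    for i in range(passes):
        lat = south + (i + 0.5) * step_deg  # centre line of pass i
        # Alternate direction so each pass starts where the last ended.
        start, end = (west, east) if i % 2 == 0 else (east, west)
        waypoints.append((lat, start))
        waypoints.append((lat, end))
    return waypoints


if __name__ == "__main__":
    # Example search box (coordinates chosen arbitrarily).
    for lat, lon in lawnmower_waypoints(41.0, -122.5, 41.1, -122.3, 4000.0):
        print(f"{lat:.5f}, {lon:.5f}")
```

A real system would also account for aircraft speed, altitude, sensor field of view, and image overlap for stitching; none of those are modeled here.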

"These systems have a number of valuable uses, but where we are seeing the most

use is fire detection, fire mapping, and ocean watch, which is maritime search and rescue, boat detection, and things like chat," said Jesse Thrush, Business Development Executive at Overwatch Imaging. "The TK series is ideal for that, given all the different sensors that allow for full high-resolution imagery. These multispectral imaging sensors have near infrared, and short-, medium- and long-wave infrared, as well as an RGB camera. You combine data from all of those to make a really clear mosaic of the fire."

Depending on which band of infrared is used, Thrush said, operators can also see through smoke, dust, and light cloud layers and pick up even very faint heat. Each band has a different use, and with all of these bands in one sensor system, operators get a very good picture of what is happening with the fire.

"For maritime, you're looking for boats, whether they're lost or shouldn't be there," Thrush added. "Scanning the whole ocean is almost impossible with the human eye alone. That's what makes our AI so great. You set the search area and details. Let's say you're looking for a red boat that was lost. It will look for red boats. It can detect boats, depending on their size, up to 30 miles [50 kilometers] away."

Overwatch Imaging is also working to integrate with third-party systems by loading its software onto those systems to automate the search and detection function on the system's sensors.

Overwatch's systems come in a variety of sizes that depend on the mission and aircraft. Each is outfitted with sensors depending on the altitude and speed the aircraft will fly. The TK-7, for instance, includes an RGB camera and both near and long-wave infrared sensors for low- to mid-altitude operations, while the TK-9 is designed for the fastest and highest altitude operations and includes all infrared bands offered. The TK-8, ideal for helicopter operations and altitudes, includes all infrared band sensors with horizon-to-horizon rolling imagers for the widest imagery swath and weighs only 11 kilograms (24 pounds).

"All of our sensors can be customized depending on the mission, but if there is a budget constraint, we can also work with agencies to install our software to work with their current equipment," Thrush said.

Several modules are available for Overwatch AI that help focus on mission operations. These include fire detection, light mapping, machinery detection, and maritime search-and-rescue functions.

Cal Fire became a customer of Overwatch Imaging last year. The system was used extensively, especially during the devastating Mill Fire that destroyed parts of Weed, Lake Shastina, and Edgewood in September 2022.

"We utilized our aircraft with the Overwatch imager not only for mapping and updates to the fire, but it was most vitally important for understanding the devastation in the structure loss," said Phillip SeLegue, Deputy Chief of fire intelligence at Cal Fire. "Overwatch helped identify damaged and destroyed structures within the footprint of the incident at night. We were able to image the area, interrogate the data and [carry out] post-processing within a short turnaround to identify 118 structures within the footprint of the incident. Some of them we knew were damaged, some of them we knew were destroyed."

SeLegue said it took about six hours from collection to post-processing to understand and identify those damaged and destroyed structures on the ground level, allowing teams on the ground to go in and survey for a more comprehensive report.

"The processing portion of it was vitally important to submit for the fire management assistance grant," SeLegue said. "That grant assists local jurisdictions and communities in rebounding from a devastating loss by recouping those monies and then actually getting not only state but federal assistance to help in the recovery process."